Building Foundations for NLP Research and Learning

Why Learn NLP with NLTK?

When students begin their journey into Natural Language Processing (NLP), they need a tool that helps them build a solid foundation in the core concepts.

This is exactly where NLTK (Natural Language Toolkit) becomes powerful.

What is NLTK?

NLTK (Natural Language Toolkit) is a Python library designed for learning, teaching, and research in NLP.

It provides:

Easy-to-use APIs

Pre-built datasets (corpora)

Linguistic tools

Educational resources

Unlike production-oriented libraries like spaCy, NLTK focuses more on learning and manual control than on speed.

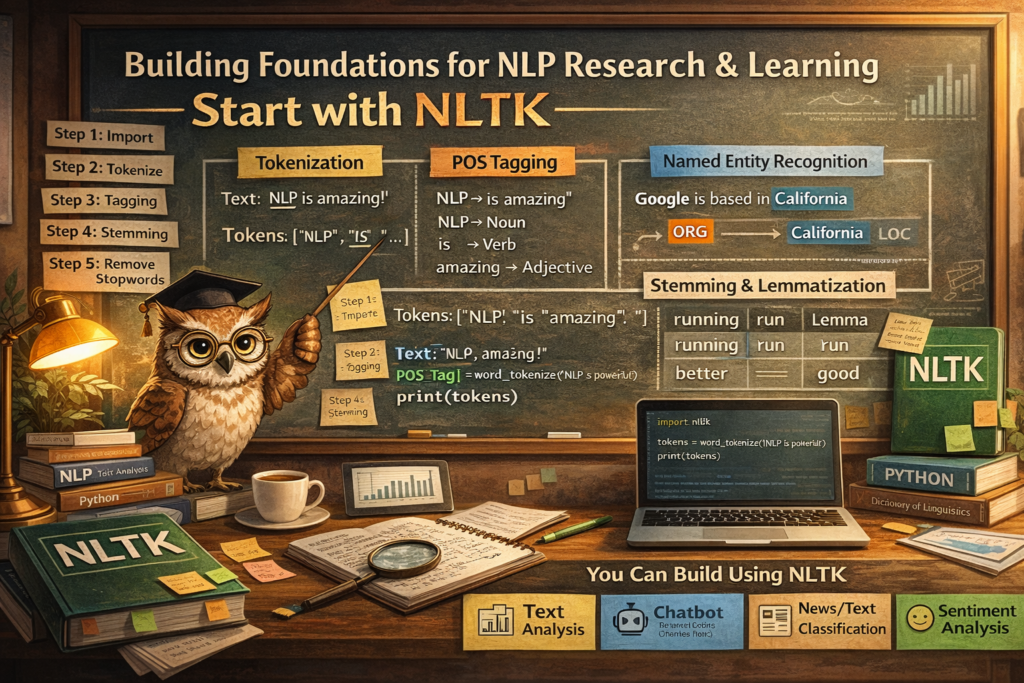

Core NLP Tasks in NLTK

Let’s break down the most important building blocks:

Tokenization (Breaking Text into Pieces)

Tokenization splits text into smaller units:

Words

Sentences

Example:

Text: "NLP is amazing!"

Tokens: ["NLP", "is", "amazing", "!"]
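The example above can be reproduced with a minimal sketch like the one below. It uses NLTK's regex-based `wordpunct_tokenize`, which needs no downloaded models (unlike `word_tokenize`, which relies on the Punkt resources). The `\w+` pattern passed to `RegexpTokenizer` is an illustrative choice, not a recommended default.

```python
from nltk.tokenize import RegexpTokenizer, wordpunct_tokenize

text = "NLP is amazing!"

# Split into runs of word characters and runs of punctuation.
tokens = wordpunct_tokenize(text)
print(tokens)  # ['NLP', 'is', 'amazing', '!']

# Keep only word characters, dropping punctuation entirely.
words_only = RegexpTokenizer(r"\w+").tokenize(text)
print(words_only)  # ['NLP', 'is', 'amazing']
```

Which tokenizer fits depends on the task: punctuation tokens matter for parsing, but are often noise for word counts.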

Part-of-Speech (POS) Tagging

Assigns grammatical roles:

Noun

Verb

Adjective

Example:

"NLP is amazing"

NLP → Noun

is → Verb

amazing → Adjective
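To see the idea behind tagging without downloading a trained model, here is a small sketch of a lookup tagger. The `lookup` table is hand-written for this one sentence and is purely an assumption for illustration; real taggers learn such mappings from annotated corpora. The tags follow the Penn Treebank convention (`NN` noun, `VBZ` third-person singular verb, `JJ` adjective).

```python
from nltk.tag import DefaultTagger, UnigramTagger

# Hand-written lookup table (illustrative only; real taggers learn this from data).
lookup = {"is": "VBZ", "amazing": "JJ"}

# Words missing from the table fall back to the noun tag "NN".
tagger = UnigramTagger(model=lookup, backoff=DefaultTagger("NN"))

tagged = tagger.tag(["NLP", "is", "amazing"])
print(tagged)  # [('NLP', 'NN'), ('is', 'VBZ'), ('amazing', 'JJ')]
```

The backoff chain is the design point here: each tagger handles what it knows and passes the rest to a simpler one.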

Named Entity Recognition (NER)

Identifies real-world entities:

Person names

Locations

Organizations

Example:

"Google is based in California"

Google → Organization

California → Location
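NLTK's `ne_chunk` returns its result as a tree in which each entity is a labelled subtree. The sketch below shows one way to read entities out of such a tree. To stay runnable without downloaded models, it uses a hand-built tree that only mimics the shape of `ne_chunk` output; the labels in it are assumptions, and the real chunker may label these words differently. `extract_entities` is a hypothetical helper, not part of NLTK.

```python
from nltk import Tree

# Hand-built tree mimicking the shape of nltk.ne_chunk output (illustrative labels).
# The real call would be: nltk.ne_chunk(nltk.pos_tag(nltk.word_tokenize(text)))
chunked = Tree("S", [
    Tree("ORGANIZATION", [("Google", "NNP")]),
    ("is", "VBZ"),
    ("based", "VBN"),
    ("in", "IN"),
    Tree("GPE", [("California", "NNP")]),
])

def extract_entities(tree):
    """Return (entity text, label) pairs from a chunked sentence tree."""
    entities = []
    for node in tree:
        # Entity chunks are subtrees; ordinary tokens are (word, tag) tuples.
        if isinstance(node, Tree):
            text = " ".join(word for word, _tag in node.leaves())
            entities.append((text, node.label()))
    return entities

entities = extract_entities(chunked)
print(entities)  # [('Google', 'ORGANIZATION'), ('California', 'GPE')]
```

`GPE` (geo-political entity) is the label NLTK's chunker typically uses for places such as countries and states.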

Stemming & Lemmatization

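Reduces words to a base form. Stemming strips suffixes using fixed rules, while lemmatization maps a word to its dictionary form: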

| Word | Stem | Lemma |
|---|---|---|
| running | run | run |
| better | better | good |
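The difference in the table can be checked with a short sketch. The stemming part needs no downloads. The lemmatization part assumes the `wordnet` resource is installed and that a part-of-speech hint is supplied, so it is wrapped in a guard. The extra word "studies" is added here only to show that a stem need not be a real word.

```python
from nltk.stem import PorterStemmer, WordNetLemmatizer

stemmer = PorterStemmer()
words = ["running", "better", "studies"]

# Stemming applies suffix-stripping rules; the result need not be a real word.
stems = [stemmer.stem(w) for w in words]
print(stems)  # ['run', 'better', 'studi']

# Lemmatization looks words up in WordNet, so it needs the 'wordnet' resource
# and a part-of-speech hint ('v' = verb, 'a' = adjective).
try:
    lemmatizer = WordNetLemmatizer()
    print(lemmatizer.lemmatize("running", pos="v"))
    print(lemmatizer.lemmatize("better", pos="a"))
except LookupError:
    print("Run nltk.download('wordnet') first to enable lemmatization.")
```

With the resource installed, the two lemmatizer calls are expected to reproduce the Lemma column. Without the `pos` argument the lemmatizer treats every word as a noun, which is a common source of surprising results.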

Stopword Removal

Removes very common words that add little meaning on their own:

is, the, and, in

NLTK Workflow (Step-by-Step)

Here’s how NLP typically works using NLTK:

Step 1: Import Library

import nltk

Step 2: Download Resources

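nltk.download('punkt')  # tokenizer models; newer NLTK releases may ask for 'punkt_tab' instead

nltk.download('averaged_perceptron_tagger')  # POS tagger; newer releases may ask for 'averaged_perceptron_tagger_eng'

nltk.download('stopwords')  # stopword lists used in Step 5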

Step 3: Tokenization

from nltk.tokenize import word_tokenize

text = "NLP is powerful"

tokens = word_tokenize(text)

print(tokens)

Step 4: POS Tagging

from nltk import pos_tag

print(pos_tag(tokens))

Step 5: Stopword Removal

from nltk.corpus import stopwords

stop_words = set(stopwords.words('english'))

# Compare in lowercase, because the stopword list is all lowercase

filtered = [w for w in tokens if w.lower() not in stop_words]

print(filtered)

Step 6: Stemming

from nltk.stem import PorterStemmer

stemmer = PorterStemmer()

print([stemmer.stem(w) for w in tokens])

What You Can Build Using NLTK

Even though it’s educational, you can still build:

Text Analyzer

Word frequency

Keyword extraction

Sentence structure
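As a sketch of the word-frequency part of such an analyzer, NLTK's `FreqDist` counts tokens directly. The sample sentence is made up for illustration, and `wordpunct_tokenize` is used only because it needs no downloaded models.

```python
from nltk import FreqDist
from nltk.tokenize import wordpunct_tokenize

text = "the cat sat on the mat and the dog sat too"  # made-up sample sentence

# Lowercase first so that "The" and "the" are counted together.
tokens = wordpunct_tokenize(text.lower())
freq = FreqDist(tokens)

print(freq["the"])          # 3
print(freq.most_common(2))  # [('the', 3), ('sat', 2)]
```

Here the most frequent word is a stopword, which is why frequency analysis is usually combined with stopword removal before picking keywords.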

Chatbot (Rule-Based)

Pattern matching

Intent recognition (basic)
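A rule-based chatbot of this kind can be sketched with `nltk.chat.util.Chat`, which matches input against regular expressions in order and answers with the first rule that fits. The three rules below are invented for illustration, and each has a single response so the behaviour is predictable.

```python
from nltk.chat.util import Chat, reflections

# Each pair is (regex pattern, list of possible responses).
# %1 is replaced by the first captured group. These rules are made up for illustration.
pairs = [
    (r"hi|hello", ["Hello! What would you like to know about NLP?"]),
    (r"my name is (.*)", ["Nice to meet you, %1."]),
    (r"(.*)", ["Sorry, I only know a few patterns."]),  # catch-all fallback
]

bot = Chat(pairs, reflections)

reply_hello = bot.respond("hello")
reply_name = bot.respond("my name is Ada")
reply_other = bot.respond("what is tokenization")

print(reply_hello)
print(reply_name)
print(reply_other)
```

Rule order matters: the catch-all pattern must come last, otherwise it would answer every input.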

News/Text Classifier

Categorize text (sports, politics, tech)
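A minimal sketch of such a classifier, using NLTK's `NaiveBayesClassifier` with bag-of-words features. The six training sentences are invented and far too few for real use, and `features` is a hypothetical helper; the point is only to show the shape of the training data.

```python
from nltk import NaiveBayesClassifier

def features(text):
    """Bag-of-words features: mark each lowercase word as present."""
    return {word: True for word in text.lower().split()}

# Tiny made-up training set; a real classifier needs far more labelled text.
train = [
    ("the team won the match", "sports"),
    ("the striker scored a late goal", "sports"),
    ("parliament passed the new bill", "politics"),
    ("the minister won the election", "politics"),
    ("the new phone has a faster chip", "tech"),
    ("the startup released a software update", "tech"),
]

# NLTK expects a list of (feature dict, label) pairs.
classifier = NaiveBayesClassifier.train(
    [(features(text), label) for text, label in train]
)

label = classifier.classify(features("the striker scored"))
print(label)  # sports
```

The same pattern with "positive" and "negative" labels gives a basic sentiment classifier.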

Sentiment Analysis (Basic)

Positive / Negative classification

Why NLTK is Important for Students

Most beginners make a mistake: they jump straight to advanced libraries and models.

They do so without understanding:

Tokenization logic

Grammar structure

Text normalization

Linguistic rules

NLTK helps you learn these fundamentals by working through each step yourself.

NLTK vs Modern NLP Libraries

| Feature | NLTK | spaCy |
|---|---|---|
| Purpose | Learning & Research | Production |
| Speed | Slower | Very Fast |
| Ease | Beginner-friendly | Developer-friendly |
| Control | High (manual) | Automated pipeline |

Best Way to Learn NLTK

Students can follow this approach:

Start with Basics

Tokenization

POS tagging

Stopwords

Move to Processing

Stemming

Lemmatization

Frequency analysis

Build Mini Projects

Text summarizer

Spam classifier

Chatbot

Then Upgrade

spaCy

Transformers

LLMs

NLTK is not just a library. It is a foundation for learning how NLP works.

If you want to truly understand NLP, mastering NLTK is a solid first step.

Happy Learning!