State-of-the-Art Pretrained Models: BERT, GPT & More

Introduction

Natural Language Processing (NLP) has evolved significantly over the past decade. Earlier approaches relied on rule-based systems and statistical models, which often struggled to understand context and meaning in human language.

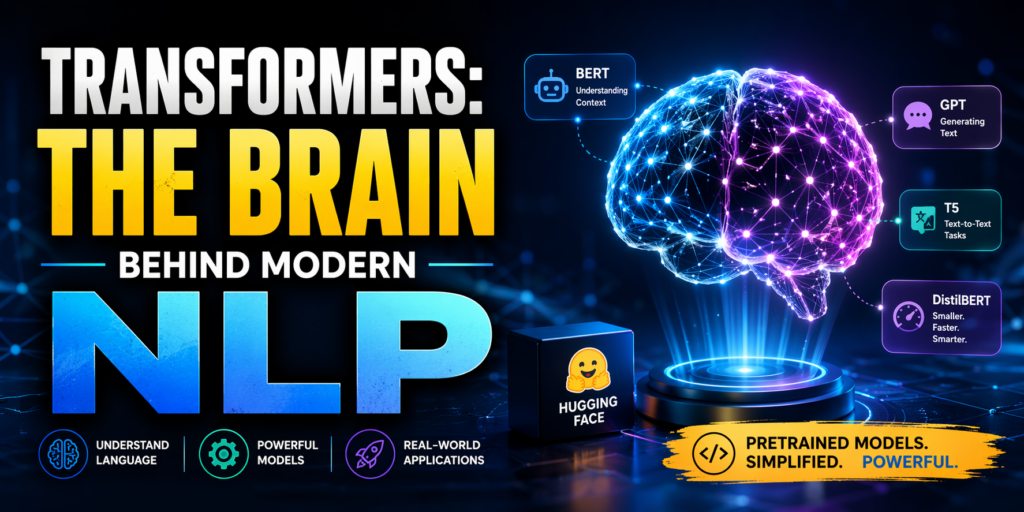

The introduction of the Transformer architecture in 2017 marked a major breakthrough. By processing all the words in a sequence in parallel and relating them to one another through attention, Transformers capture context far better than earlier approaches, leading to state-of-the-art performance across a wide range of NLP tasks.

What is Hugging Face?

Hugging Face is an AI company and open-source community that provides tools, pretrained models, and datasets for working with transformer-based models.

Its most widely used library is Transformers, which offers:

- Access to thousands of pretrained models
- Simple APIs for quick implementation
- Support for popular deep learning frameworks like PyTorch and TensorFlow

This library allows developers and students to use powerful NLP models without building them from scratch.

Understanding Transformer Models

Transformers are deep learning models designed to process sequential data such as text. Unlike earlier models, they do not rely on recurrence (like RNNs) or convolution. Instead, they use an attention mechanism to understand relationships between words in a sentence.

This enables:

- Better handling of long-range dependencies
- Faster training through parallel processing
- Improved contextual understanding

Key Pretrained Models

1. BERT (Bidirectional Encoder Representations from Transformers)

BERT is designed to understand the meaning of text by considering context from both directions (left and right).

Key Characteristics:

- Deep bidirectional understanding
- Strong performance in comprehension tasks

Common Use Cases:

- Sentiment Analysis
- Question Answering
- Text Classification

2. GPT (Generative Pretrained Transformer)

GPT focuses on generating coherent and contextually relevant text.

Key Characteristics:

- Unidirectional (left-to-right) processing
- Excellent for generative tasks

Common Use Cases:

- Chatbots and conversational AI
- Content generation
- Code generation
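The difference between BERT's bidirectional context and GPT's left-to-right processing can be pictured as an attention mask: a grid that says which positions each token is allowed to look at. The sketch below is a simplified illustration in plain Python, not code from either model; the function names are hypothetical.

```python
def bidirectional_mask(length):
    """Every position may attend to every other position (BERT-style encoder)."""
    return [[1] * length for _ in range(length)]


def causal_mask(length):
    """Position i may attend only to positions 0..i (GPT-style decoder)."""
    return [[1 if col <= row else 0 for col in range(length)]
            for row in range(length)]


tokens = ["I", "love", "NLP"]
masks = [
    ("bidirectional", bidirectional_mask(len(tokens))),
    ("causal", causal_mask(len(tokens))),
]
for name, mask in masks:
    print(name)
    for token, row in zip(tokens, mask):
        # A 1 means "this token can see that position"; a 0 means it cannot.
        print(f"  {token:>5}: {row}")
```

In the causal mask, each row only has ones up to its own position, which is why a GPT-style model can generate text one token at a time without seeing the future.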

3. Other Important Models

- RoBERTa – An optimized version of BERT with improved training techniques
- T5 – Treats every NLP task as a text-to-text problem
- DistilBERT – A smaller and faster version of BERT
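To make the text-to-text idea behind T5 concrete, here is a minimal sketch of how different tasks can be written as plain input strings. The prefixes follow the convention used by the original T5 checkpoints; the helper name `to_text_to_text` is hypothetical, and other fine-tuned variants may expect different prefixes.

```python
def to_text_to_text(task_prefix, text):
    """Format an input as '<prefix>: <text>' so one model can handle many tasks."""
    return f"{task_prefix}: {text.strip()}"


prompts = [
    to_text_to_text("translate English to German", "The house is wonderful."),
    to_text_to_text("summarize", "Transformers use attention to relate words in a sentence."),
]
for prompt in prompts:
    print(prompt)
```

The model's answer is also plain text, whether that is a translation or a summary, so the same architecture and training objective can be reused across tasks.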

How Transformers Work

At the core of transformer models is the attention mechanism, which allows the model to focus on the most relevant words in a sentence.

For example:

“The animal didn’t cross the road because it was tired.”

A trained transformer can learn to link “it” to “the animal” rather than “the road”, because attention lets every word weigh every other word in the sentence. This level of contextual understanding is what makes transformers highly effective.
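The idea of "focusing on the most relevant words" can be sketched as scaled dot-product attention: compare a query vector with each key vector, turn the scores into weights that sum to 1, and average the value vectors with those weights. The example below uses plain Python and hand-picked toy vectors; in a real model these vectors are learned, and attention runs over many heads in parallel.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over all keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


def attend(query, keys, values):
    """Weighted average of the value vectors, using the attention weights."""
    weights = attention_weights(query, keys)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]


# Hypothetical 2-dimensional vectors, chosen by hand for illustration only.
tokens = ["animal", "road", "tired"]
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
query_for_it = [2.0, 0.0]  # made-up query vector for the word "it"

weights = attention_weights(query_for_it, keys)
for token, weight in zip(tokens, weights):
    print(f"{token}: {weight:.2f}")
```

With these toy vectors, "animal" receives the largest weight because its key points in the same direction as the query for "it".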

Using Hugging Face Transformers

One of the biggest advantages of Hugging Face is its simplicity. Below is a basic example using Python:

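```python
from transformers import pipeline

# No model is named, so the library falls back to its default sentiment model.
classifier = pipeline("sentiment-analysis")

# The result is a list with one dict per input, holding a "label" and a "score".
result = classifier("I love learning NLP with transformers!")
print(result)
```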

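This code performs sentiment analysis using a pretrained model without requiring complex setup or training. The pretrained model is downloaded the first time the code runs, so that first run needs an internet connection.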
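The sentiment-analysis pipeline returns a list of dictionaries, each with a `label` and a `score`. A common next step is to act only on confident predictions. The sketch below shows one way to do that; the helper name `confident_labels`, the threshold, and the sample values are all assumptions for illustration, not real model output.

```python
def confident_labels(results, threshold=0.9):
    """Keep only the labels whose score is at least `threshold`."""
    return [r["label"] for r in results if r["score"] >= threshold]


# Illustrative values only, shaped like pipeline output but not produced by a model.
sample_results = [
    {"label": "POSITIVE", "score": 0.98},
    {"label": "NEGATIVE", "score": 0.55},
]

print(confident_labels(sample_results))
```

The right threshold depends on the application: a lower value keeps more predictions, while a higher value leaves more inputs for manual review.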

Applications of Transformers in NLP

Transformer models are widely used in real-world applications, including:

- Text classification
- Named Entity Recognition (NER)
- Machine translation
- Text summarization
- Question answering systems
- Conversational AI
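Most of these applications correspond to a task name that can be passed to `pipeline()` in the Transformers library. The lookup below is a rough guide rather than an official mapping: the task identifiers are ones the library accepts, but pairing "Conversational AI" with `text-generation` is a judgment call, and the helper `task_for` is hypothetical.

```python
# Application names come from the list above; values are pipeline task identifiers.
PIPELINE_TASKS = {
    "Text classification": "text-classification",
    "Named Entity Recognition (NER)": "token-classification",
    "Machine translation": "translation",
    "Text summarization": "summarization",
    "Question answering systems": "question-answering",
    "Conversational AI": "text-generation",  # assumption: chat built on a generative model
}


def task_for(application):
    """Look up the pipeline task name for an application, or raise a clear error."""
    try:
        return PIPELINE_TASKS[application]
    except KeyError:
        raise ValueError(f"No pipeline task mapped for: {application!r}") from None


print(task_for("Text summarization"))
```

With the library installed, the returned string can be passed straight to `pipeline(...)`, usually together with a `model` argument chosen for the task.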

Advantages of Transformers

- High accuracy and performance
- Ability to leverage pretrained models (transfer learning)
- Efficient handling of large-scale data
- Flexibility across multiple NLP tasks

Challenges

Despite their advantages, transformers also have some limitations:

- High computational requirements
- Large model sizes
- Increased cost for training and deployment
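A back-of-the-envelope calculation shows why model size matters: the memory needed just to hold the weights is roughly the number of parameters times the bytes per parameter. The sketch below uses approximate, commonly cited parameter counts; it ignores activations, optimizer state, and framework overhead, so real usage is higher, especially during training.

```python
def weight_memory_mb(num_parameters, bytes_per_parameter=4):
    """Approximate memory for the weights alone, in megabytes (10**6 bytes)."""
    return num_parameters * bytes_per_parameter / 1_000_000


# Approximate parameter counts; exact figures vary slightly by checkpoint.
models = {
    "DistilBERT": 66_000_000,
    "BERT-base": 110_000_000,
    "BERT-large": 340_000_000,
}

for name, params in models.items():
    fp32 = weight_memory_mb(params)     # 32-bit floats: 4 bytes each
    fp16 = weight_memory_mb(params, 2)  # 16-bit floats: 2 bytes each
    print(f"{name}: ~{fp32:.0f} MB in fp32, ~{fp16:.0f} MB in fp16")
```

This is also why smaller models such as DistilBERT and lower-precision formats are popular for deployment: both reduce the memory footprint directly.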

Real-World Impact

Transformer-based models are used in many modern applications, such as:

- Search engines
- Virtual assistants
- Recommendation systems
- Automated content generation

They have become the foundation of many AI-powered systems used today.

Conclusion

Transformers have reshaped the field of NLP by enabling machines to understand and generate human language more effectively.

With tools like the Hugging Face Transformers library, students and developers can quickly build powerful NLP applications using pretrained models such as BERT and GPT.

Learning these technologies is valuable for anyone looking to work in AI, machine learning, or modern software development.

Happy Learning!