Real-world datasets are full of categorical variables:

City: Mumbai, Pune, Delhi

Education: Graduate, Post-Graduate

Product Type: Electronics, Clothing, Grocery

Browser: Chrome, Safari, Firefox

However, most machine learning models work with numbers, not text.

This is where Category Encoders come into play.

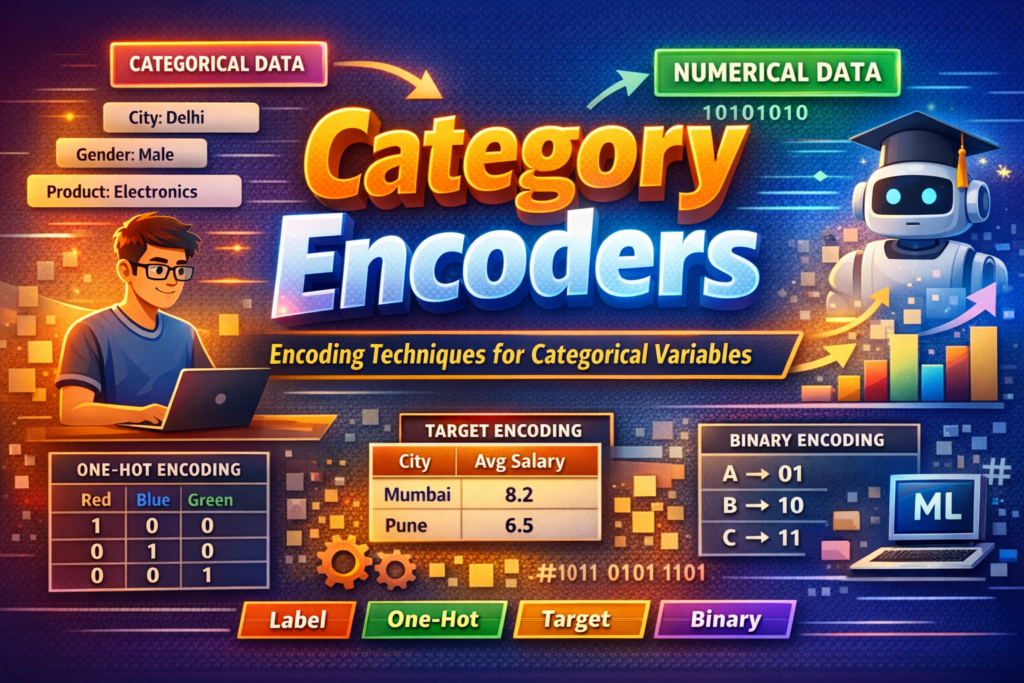

Category Encoding is the process of converting categorical (text/label-based) data into numerical representations that ML algorithms can understand.

This article explains:

Why encoding is necessary

Popular encoding techniques

When to use which encoder

Pitfalls to avoid

Best practices used in industry

Types of Categorical Variables

Before choosing an encoder, identify which type of categorical variable you have:

1️⃣ Nominal Categories (No Order)

Examples:

Gender: Male, Female

City: Delhi, Pune, Chennai

No inherent ranking

2️⃣ Ordinal Categories (Ordered)

Examples:

Education: High School < Graduate < Post-Graduate

Rating: Poor < Average < Good < Excellent

Order matters

Why Category Encoding Is Important

Without encoding:

Models cannot compute distances or splits

Most implementations raise an error on raw string inputs (scikit-learn estimators, for example, expect numeric features)

Proper encoding helps:

Improve model accuracy

Avoid false-ordering bias and reduce overfitting

Handle high-cardinality features

Build scalable ML pipelines

Common Category Encoding Techniques

1️⃣ Label Encoding

What it does:

Assigns each category a unique integer.

| Category | Encoded |
|---|---|
| Red | 0 |
| Blue | 1 |
| Green | 2 |

Pros

Simple and fast

Memory efficient

Cons

Introduces false order

Misleading for nominal data: models such as linear regression treat the codes as magnitudes

Best Use Case

Ordinal variables, but only when the assigned integers follow the real ranking
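A minimal from-scratch sketch of label encoding, assuming codes are assigned in order of first appearance so that they match the Red/Blue/Green table above. The helper name `label_encode` is hypothetical. Note that scikit-learn's LabelEncoder instead sorts the classes alphabetically (Blue = 0, Green = 1, Red = 2) and is documented for encoding target labels rather than input features.

```python
def label_encode(values):
    """Map each distinct category to an integer, in order of first appearance.

    Hypothetical helper for illustration; returns (codes, mapping).
    """
    mapping = {}
    for value in values:
        if value not in mapping:
            mapping[value] = len(mapping)  # next unused integer
    codes = [mapping[value] for value in values]
    return codes, mapping


codes, mapping = label_encode(["Red", "Blue", "Green", "Blue"])
print(mapping)  # {'Red': 0, 'Blue': 1, 'Green': 2}
print(codes)    # [0, 1, 2, 1]
```

Nothing in the data says Green is "greater than" Red, yet a linear model will read 2 > 0 as meaningful; this is the false order listed under Cons.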

2️⃣ One-Hot Encoding (OHE)

What it does:

Creates a new binary column for each category.

| City | Delhi | Pune | Chennai |
|---|---|---|---|
| Delhi | 1 | 0 | 0 |
| Pune | 0 | 1 | 0 |

Pros

No ordering bias

Works well with linear models

Cons

Curse of dimensionality

Very wide, sparse data (and slower, poorer models) when a feature has many unique values

Best Use Case

Nominal features with low cardinality
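A minimal sketch of one-hot encoding in plain Python, assuming the category list is fixed from training data and that unseen values (here a hypothetical "Mumbai" row) become all zeros, which is how scikit-learn's OneHotEncoder behaves with handle_unknown="ignore". The number of output columns equals the number of categories, which is why cardinality matters.

```python
def one_hot(values, categories):
    """Return one binary column per known category.

    Values not in `categories` become an all-zero row (an assumption that
    mirrors one common way of handling unseen categories).
    """
    return [[1 if value == category else 0 for category in categories] for value in values]


cities = ["Delhi", "Pune", "Chennai"]  # categories learned from training data
rows = one_hot(["Delhi", "Pune", "Mumbai"], cities)
print(rows)  # [[1, 0, 0], [0, 1, 0], [0, 0, 0]]
```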

3️⃣ Ordinal Encoding

What it does:

Maps categories to integers that follow their logical order.

| Education | Encoded |
|---|---|
| High School | 1 |
| Graduate | 2 |
| Post-Graduate | 3 |

Pros

Preserves ranking

Compact representation

Cons

Incorrect ordering leads to wrong predictions

Best Use Case

Ordinal variables with clear hierarchy
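A sketch in plain Python, assuming the order is written out by hand (the helper `ordinal_encode` is hypothetical). Numbering starts at 1 to match the table; scikit-learn's OrdinalEncoder, given categories=[["High School", "Graduate", "Post-Graduate"]], produces the same ranking starting at 0.

```python
EDUCATION_ORDER = ["High School", "Graduate", "Post-Graduate"]  # lowest to highest


def ordinal_encode(values, order, start=1):
    """Encode each value by its position in an explicit, hand-specified order.

    Raises KeyError for a value missing from `order`, so unknown categories
    fail loudly instead of silently getting a wrong rank.
    """
    rank = {category: position for position, category in enumerate(order, start=start)}
    return [rank[value] for value in values]


print(ordinal_encode(["Graduate", "High School", "Post-Graduate"], EDUCATION_ORDER))  # [2, 1, 3]
```

Writing the order explicitly is the safeguard against the "incorrect ordering" risk above: alphabetical sorting would put Graduate before High School.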

4️⃣ Target Encoding (Mean Encoding)

What it does:

Replaces each category with the mean of the target variable over the rows in that category, computed on training data only.

| City | Avg Salary |
|---|---|
| Mumbai | 8.2 |
| Pune | 6.5 |

Pros

Handles high-cardinality features

Powerful for tree-based models

Cons

Risk of data leakage

Overfitting if not regularized

Best Use Case

Large datasets

High-cardinality categorical features
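A minimal sketch of target encoding with one common regularization, additive smoothing, which shrinks each category mean toward the global mean: encoded(c) = (sum of y in c + m × global mean) / (count of c + m). The smoothing strength m, the helper names, and the salary figures are hypothetical. The encoder is fit on training rows only, and unseen categories fall back to the global mean. scikit-learn 1.3+ provides sklearn.preprocessing.TargetEncoder, whose fit_transform additionally uses internal cross-fitting to limit leakage.

```python
from collections import defaultdict


def fit_target_encoder(categories, targets, smoothing=2.0):
    """Learn smoothed per-category target means from TRAINING data only."""
    global_mean = sum(targets) / len(targets)
    sums = defaultdict(float)
    counts = defaultdict(int)
    for category, y in zip(categories, targets):
        sums[category] += y
        counts[category] += 1
    # Blend each category mean with the global mean; rare categories shrink more.
    mapping = {
        category: (sums[category] + smoothing * global_mean) / (counts[category] + smoothing)
        for category in counts
    }
    return mapping, global_mean


def apply_target_encoder(categories, mapping, global_mean):
    """Encode new rows; categories unseen during fitting get the global mean."""
    return [mapping.get(category, global_mean) for category in categories]


# Hypothetical training data: city and salary.
train_city = ["Mumbai", "Mumbai", "Pune", "Pune"]
train_salary = [8.0, 10.0, 6.0, 4.0]

mapping, global_mean = fit_target_encoder(train_city, train_salary)
print(mapping)  # {'Mumbai': 8.0, 'Pune': 6.0}
print(apply_target_encoder(["Pune", "Delhi"], mapping, global_mean))  # [6.0, 7.0]
```

The raw means would be 9.0 (Mumbai) and 5.0 (Pune); with only two rows each, smoothing pulls both toward the global mean of 7.0, which is the regularization the Cons list calls for.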

5️⃣ Frequency / Count Encoding

What it does:

Replaces each category with how often it appears in the training data, either as a raw count or as a proportion (as below).

| Browser | Frequency |
|---|---|
| Chrome | 0.65 |
| Safari | 0.20 |
| Firefox | 0.15 |

Pros

Simple

No dimensional explosion

Cons

Loses category identity: categories with the same frequency get the same value

Best Use Case

When frequency itself is informative
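A plain-Python sketch, assuming a hypothetical 20-row training column whose proportions reproduce the table above; normalize=False gives count encoding instead. The mapping is learned on training data and reused unchanged at prediction time.

```python
from collections import Counter


def fit_frequency_encoder(values, normalize=True):
    """Map each category to its share of training rows (or its raw count)."""
    counts = Counter(values)
    if not normalize:
        return dict(counts)  # count encoding
    total = len(values)
    return {category: count / total for category, count in counts.items()}


# Hypothetical training column: 20 sessions.
browsers = ["Chrome"] * 13 + ["Safari"] * 4 + ["Firefox"] * 3
print(fit_frequency_encoder(browsers))                   # {'Chrome': 0.65, 'Safari': 0.2, 'Firefox': 0.15}
print(fit_frequency_encoder(browsers, normalize=False))  # {'Chrome': 13, 'Safari': 4, 'Firefox': 3}
```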

6️⃣ Binary Encoding

What it does:

Label-encodes the categories, then writes each integer code in binary, with one column per bit.

Example:

Category → Label → Binary

A → 1 → 01

B → 2 → 10

C → 3 → 11

Pros

Reduces dimensions: about log2(k) columns for k categories, instead of k for one-hot

Efficient for high cardinality

Cons

Less interpretable

Best Use Case

High-cardinality categorical variables
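A minimal sketch of the two steps in plain Python (the helper is hypothetical and assigns labels in first-seen order, starting at 1). It uses the fewest bits that fit the largest label, so three categories need two columns, and 1,000 categories would need only 10 (since 1,000 < 2^10 = 1,024) instead of 1,000 one-hot columns.

```python
def binary_encode(values):
    """Label-encode (starting at 1), then split each code into binary digits.

    Uses the minimum number of bits needed for the largest code.
    """
    labels = {}
    for value in values:
        if value not in labels:
            labels[value] = len(labels) + 1  # first-seen order, starting at 1
    width = max(labels.values()).bit_length()
    return [[int(bit) for bit in format(labels[value], f"0{width}b")] for value in values]


print(binary_encode(["A", "B", "C", "B"]))  # [[0, 1], [1, 0], [1, 1], [1, 0]]
```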

7️⃣ Hashing Encoding

What it does:

Applies a hash function to each category and uses the result, modulo a fixed number of output columns, to pick the column to set.

Pros

Extremely memory efficient

No need to store a category-to-column mapping

Cons

Hash collisions: different categories can share a column

Not human-readable

Best Use Case

Streaming data

Very large datasets
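A sketch of the hashing trick in plain Python, under stated assumptions: md5 as the hash, 8 output columns, and a single 1 per row (real implementations may use other hash functions, signed values, or a different column count). No mapping is stored, so a brand-new category at prediction time still lands in a valid column, which is why hashing suits streaming data.

```python
import hashlib


def hash_encode(value, n_buckets=8):
    """Return a one-hot row over a fixed number of hash buckets.

    md5 is used because Python's built-in hash() of str is salted per process,
    so it would not give the same bucket across runs.
    """
    digest = hashlib.md5(str(value).encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % n_buckets
    row = [0] * n_buckets
    row[bucket] = 1
    return row


# 20 hypothetical user IDs into 8 buckets: collisions are unavoidable.
user_ids = [f"user_{i}" for i in range(20)]
buckets = [hash_encode(uid).index(1) for uid in user_ids]
print(len(set(buckets)), "distinct buckets for", len(user_ids), "IDs")
```

With more categories than buckets, some categories must share a column (the pigeonhole principle), which is the collision cost listed under Cons.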

Category Encoders in Python

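Most encoders are available in:

scikit-learn (OneHotEncoder, OrdinalEncoder, TargetEncoder from version 1.3, and FeatureHasher for hashing)

the category_encoders package (all five encoders listed below, including BinaryEncoder and HashingEncoder)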

Common encoders:

OneHotEncoder

OrdinalEncoder

TargetEncoder

BinaryEncoder

HashingEncoder
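A short scikit-learn example, assuming scikit-learn is installed (the city and education values come from the tables above; "Mumbai" plays an unseen category). OneHotEncoder sorts categories alphabetically and, with handle_unknown="ignore", encodes unknown values as all zeros; OrdinalEncoder takes the order explicitly through categories and numbers from 0.

```python
from sklearn.preprocessing import OneHotEncoder, OrdinalEncoder

# One-hot: learn categories on training data, tolerate unseen values later.
ohe = OneHotEncoder(handle_unknown="ignore")
ohe.fit([["Delhi"], ["Pune"], ["Chennai"]])
print(ohe.categories_[0].tolist())  # ['Chennai', 'Delhi', 'Pune']
onehot = ohe.transform([["Pune"], ["Mumbai"]]).toarray()  # output is sparse by default
print(onehot.tolist())  # [[0.0, 0.0, 1.0], [0.0, 0.0, 0.0]]

# Ordinal: pass the real ranking explicitly instead of relying on sort order.
ordinal = OrdinalEncoder(categories=[["High School", "Graduate", "Post-Graduate"]])
ranks = ordinal.fit_transform([["Graduate"], ["High School"], ["Post-Graduate"]])
print(ranks.ravel().tolist())  # [1.0, 0.0, 2.0]
```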

Choosing the Right Encoder (Quick Guide)

| Scenario | Recommended Encoder |
|---|---|
| Small categories, nominal | One-Hot |
| Ordered categories | Ordinal |
| High cardinality | Target / Binary |
| Streaming / Big data | Hashing |
| Tree-based models | Target / Frequency |

Common Mistakes to Avoid

❌ Label encoding nominal features

❌ Fitting target encoding on the full dataset before the train-test split (data leakage)

❌ One-hot encoding high-cardinality columns

❌ Ignoring unseen categories in production

Best Practices (Industry-Ready)

✔ Always split data before fitting any encoder

✔ Use pipelines for reproducibility

✔ Regularize target encoding (e.g., smoothing or cross-fitting)

✔ Handle unknown categories gracefully

✔ Evaluate encoding impact using cross-validation
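A sketch of several of these practices together, assuming scikit-learn and NumPy and using synthetic data: the encoder sits inside a pipeline, tolerates unknown categories, and is evaluated with cross-validation. Replacing OneHotEncoder with another encoder in the same pipeline is one way to compare encodings.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import OneHotEncoder

# Hypothetical data: one categorical feature and a binary label.
rng = np.random.default_rng(0)
X = rng.choice(["Delhi", "Pune", "Chennai", "Mumbai"], size=(200, 1))
y = (rng.random(200) < np.where(X[:, 0] == "Mumbai", 0.8, 0.3)).astype(int)

# The encoder lives inside the pipeline, so cross_val_score refits it on each
# training fold only; validation folds never influence the encoding.
model = make_pipeline(OneHotEncoder(handle_unknown="ignore"), LogisticRegression())
scores = cross_val_score(model, X, y, cv=5)
print(scores.mean())
```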

Real-World Example

In an e-commerce ML model:

Product Category → One-Hot

City → Target Encoding

User ID → Hashing

Rating Level → Ordinal Encoding

This hybrid strategy balances accuracy and performance.

Key Takeaways

Encoding is not optional for most ML models

No single encoder fits all problems

Understanding data type + model is crucial

Proper encoding can dramatically improve results